Long Division and Euclid's Lemma

May 2011

Most of us first encountered long division in grade school. At the time it appeared to be nothing more than a mechanical process used to divide one number by another.

But long division is far more than a classroom arithmetic trick.

What we call long division is actually an implementation of the Division Algorithm, a procedure that traces its origins back to ancient Greek mathematics and is closely related to a foundational result known as Euclid's Division Lemma. This lemma guarantees that any integer can be uniquely decomposed into a quotient and a remainder:

$$a = bq + r \qquad \text{with} \qquad 0 \le r < b$$

In other words, long division is not merely a computational shortcut. It is a window into the internal structure of integers.

Division as a structural process

It is easy to overlook how remarkable the division algorithm actually is.

When we perform long division we are not merely computing a number. We are progressively revealing the internal structure of the dividend.

The procedure works digit by digit. At each step we determine how many copies of the divisor fit into the portion of the number currently under consideration. Whatever cannot fit becomes the remainder, and it is carried forward into the next step. In this way, division recursively decomposes the number, layer by layer. Each step exposes another digit of the quotient and another piece of the number's structure.

What makes this especially interesting is that the process can continue indefinitely. If the remainder never becomes zero, we can append zeros and continue the procedure forever. The algorithm allows us to examine the ratio between two numbers to any arbitrary level of precision, without approximation, without rounding. Unlike floating point arithmetic, which introduces errors at the limits of its representation, long division gives us exact answers as far as we care to look.

In this sense long division acts like a mathematical probe. It lets us explore the relationship between two numbers with whatever resolution we choose.

A quiet but powerful constraint

The division algorithm has a subtle but powerful property that is easy to miss.

At every stage of the procedure, the remainder is constrained within a bounded interval:

$$0 \le r < b$$

No matter how large the dividend becomes, the remainder can only take on a limited set of values. For a divisor of 7, the remainder is always one of {0, 1, 2, 3, 4, 5, 6}. For a divisor of 13, it is always one of {0, 1, ..., 12}.

This restriction is what makes the algorithm so stable. It guarantees that each step produces exactly one new digit in the quotient. And because Euclid proved that the quotient and remainder are unique for any given pair, the structure revealed by long division is not arbitrary. It is intrinsic to the numbers themselves.

This bounded remainder is also the reason that decimal expansions of rationals eventually repeat. There are only finitely many possible remainders. Once a remainder recurs, the entire sequence of digits that followed it the first time must follow it again. The repetend is born from the pigeon hole principle applied to the remainder sequence.

Mapping the quotient-remainder structure

These observations led me to construct a simple experiment.

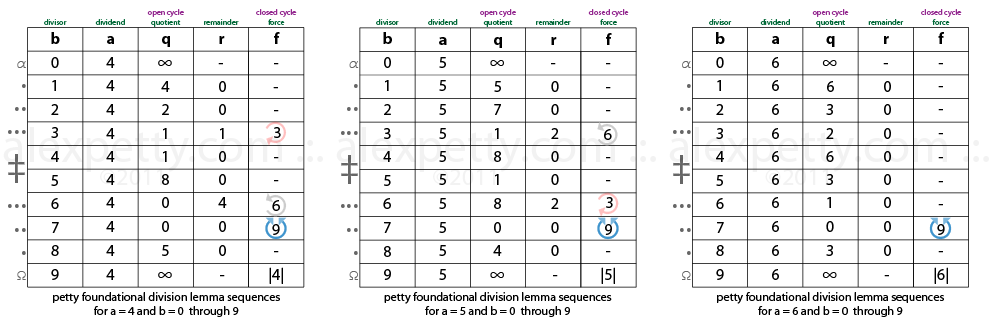

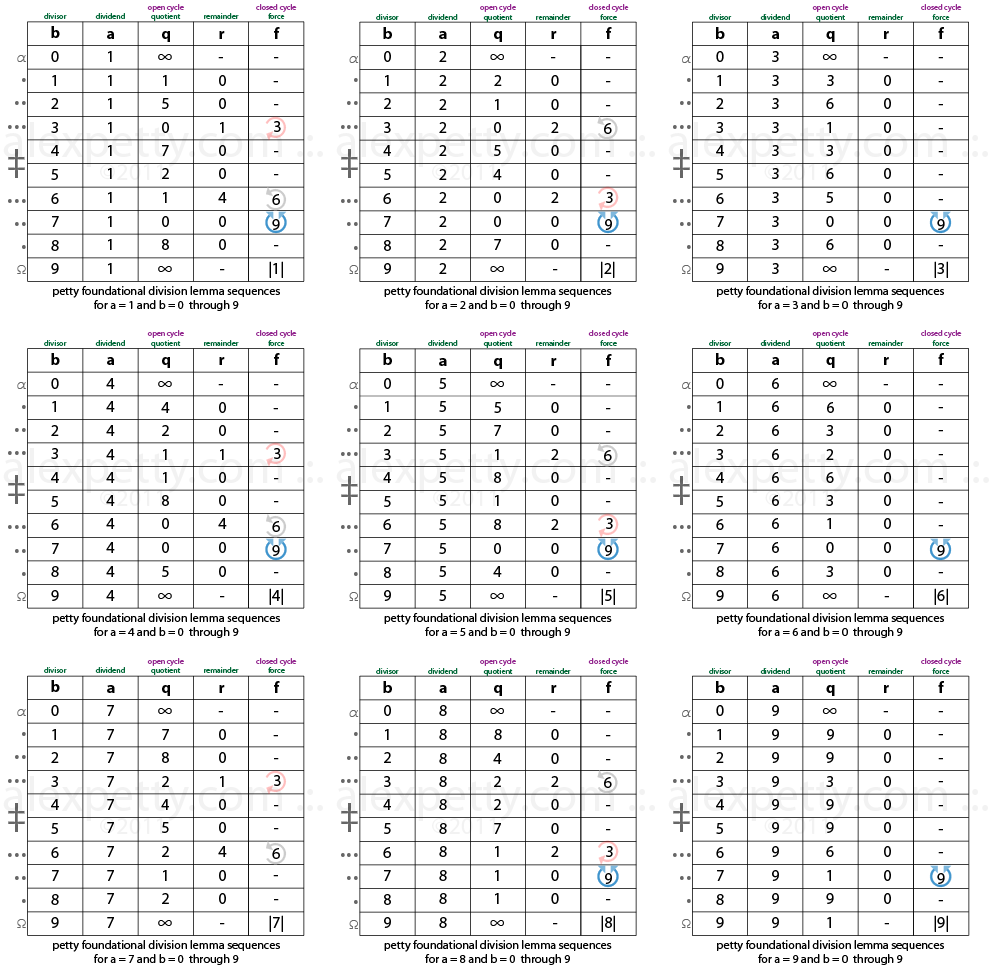

Instead of examining a single division problem, I generated the full set of quotient-remainder results produced by Euclid's division lemma for many small integers. I examined the combinations that arise when the dividend a ranges from 1 through 32 and the divisor b ranges from 0 through 9. For each pair (a, b), the division lemma produces a unique pair (q, r).

Plotting these results in tabular form produces the matrix shown below.

At first glance the chart looks like a simple table of arithmetic results. But when the values are examined collectively, regularities begin to appear. The quotients and remainders do not distribute randomly. They form repeating patterns that propagate across the table in recognizable ways.

This is not surprising if you think about it. The division algorithm is deterministic and the remainder is bounded, so the outputs must be structured. But seeing the structure laid out visually, as a field of values rather than individual computations, makes it tangible in a way the formula alone does not.

Compression and radial structure

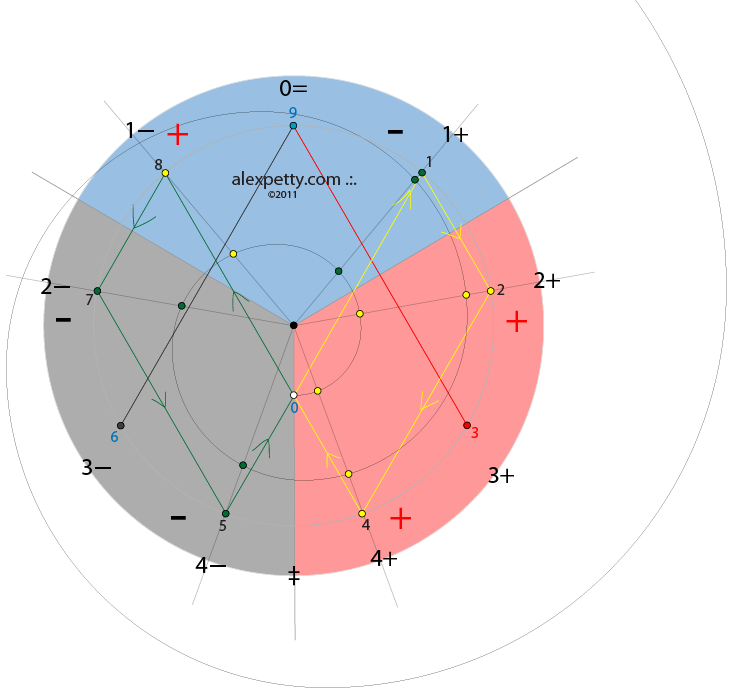

One way to see deeper into these patterns is through digit-sum compression.

If we repeatedly sum the digits of a number until only a single digit remains, many values in the matrix collapse onto a small set of radial lines. For example:

$$546 \rightarrow 5+4+6 = 15 \rightarrow 1+5 = 6$$

The number 546 lies on the 6 radial. Numbers that appear very different at full resolution turn out to share the same compressed identity. This is the digital root, and it is equivalent to reduction modulo 9.

When the results of the division lemma are examined under this compression, the patterns in the chart become considerably easier to see.

The boundaries of the cycle

Within this compressed representation, 0 and 9 behave like boundary values.

Zero is the origin of the number system. Nine is the completion of the cycle before the sequence rolls over into the next order of magnitude. Each time the sequence expands outward it eventually compresses back toward its radial origin. In a sense, the entire decimal system is a single structural cycle repeated at every scale.

Between these boundaries, certain digits play distinctive roles. The numbers 3, 6, and 9 appear as pivot points in cyclic behavior. The sequence 1, 2, 4, 8, 7, 5 (the powers of 2 under digit-sum compression) forms a repeating cycle of length 6. These are the same structures that appeared on the circle of nine in my earlier post, emerging here from a completely different starting point.

What this suggests

The deeper observation here is not that numbers possess hidden properties, but that simple mathematical rules generate surprisingly rich structure.

The division algorithm reveals one layer of this structure by decomposing numbers into quotient-remainder pairs. Digit-sum compression reveals another by showing how large numbers collapse back onto a small set of generators. The fact that these two perspectives produce overlapping patterns suggests that there is a single underlying organization connecting them.

I do not yet know how to formalize this connection. But I find it compelling that the most elementary operation in arithmetic, the one we learn before we learn anything else, contains this much structure when you look at it carefully enough.

Even something as ordinary as long division can open a window into the deeper architecture of the integers.

.:.

A note from 2026

March 2026

This post was written in 2011, when I was beginning to take seriously the idea that long division is not just a procedure but a lens. The "mathematical probe" metaphor turned out to be more apt than I realized at the time.

The bounded remainder constraint described here (0 ≤ r < b, and the pigeon hole argument for why repetends repeat) became the foundation of everything that followed. The digit function δ(r) = ⌊br/p⌋ at the center of the later papers is exactly the map that long division computes at each step: given a remainder r, produce the next digit. The entire theory of repetend alignment grew out of asking what happens when you track that map across all fractions with a common denominator, which is essentially the experiment described in this post, extended to its logical conclusion.

.:.